Opengl Reading in Ppm Image Is Static

Render Multimedia in Pure C

nullprogram.com/blog/2017/11/03/

In a previous article I demonstrated video filtering with C and a unix pipeline. Thanks to the ubiquitous support for the ridiculously simple Netpbm formats (specifically the "Portable PixMap" binary format: .ppm, P6) it's trivial to parse and produce image data in any language without image libraries. Video decoders and encoders at the ends of the pipeline do the heavy lifting of processing the complicated video formats actually used to store and transmit video.
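To give a sense of how little code the P6 format needs, here is a minimal sketch of a frame reader. The name `ppm_read` and its interface are made up for illustration, and it assumes a header without `#` comment lines and a maximum value of 255, which is what the program in this article writes.

```c
#include <stdio.h>
#include <stdlib.h>

/* Read one P6 frame from f. Returns a malloc'd RGB buffer of
 * width * height * 3 bytes, or NULL on end of input or error.
 * Assumption: no '#' comments in the header and a maxval of 255. */
static unsigned char *
ppm_read(FILE *f, int *width, int *height)
{
    int w, h, maxval;
    if (fscanf(f, "P6 %d %d %d", &w, &h, &maxval) != 3)
        return NULL;
    if (w <= 0 || h <= 0 || maxval != 255)
        return NULL;
    if (fgetc(f) == EOF)  /* the single whitespace byte after maxval */
        return NULL;

    size_t len = (size_t)w * (size_t)h * 3;
    unsigned char *buf = malloc(len);
    if (buf && fread(buf, 1, len, f) != len) {
        free(buf);  /* truncated frame */
        buf = NULL;
    }
    *width = w;
    *height = h;
    return buf;
}
```

Calling it in a loop on stdin until it returns NULL consumes a stream of concatenated frames, which is exactly what a pipeline of PPM images looks like.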

Naturally this same technique can be used to produce new video in a simple program. All that's needed are a few functions to render artifacts (lines, shapes, etc.) to an RGB buffer. With a bit of basic sound synthesis, the same concept can be applied to create audio in a separate audio stream, in this case using the simple (but not as simple as Netpbm) WAV format. Put them together and a small, standalone program can create multimedia.

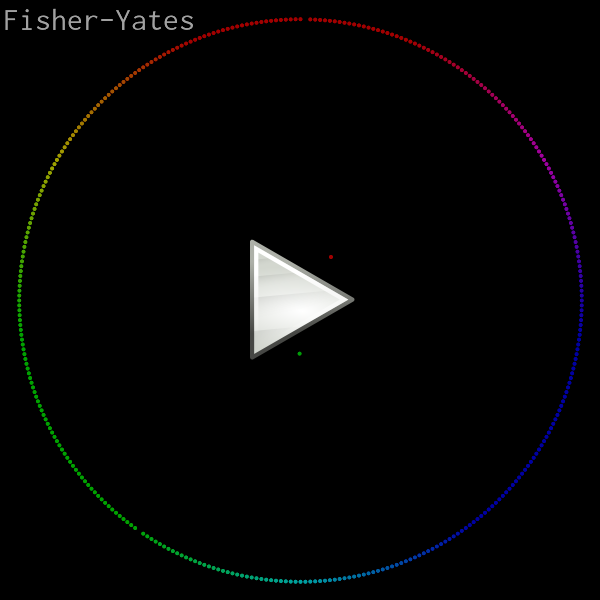

Here's the demonstration video I'll be going through in this article. It animates and visualizes various in-place sorting algorithms (see also). The elements are rendered as colored dots, ordered by hue, with red at 12 o'clock. A dot's distance from the center is proportional to its corresponding element's distance from its correct position. Each dot emits a sinusoidal tone with a unique frequency when it swaps places in a particular frame.

Original credit for this visualization concept goes to w0rthy.

All of the source code (less than 600 lines of C), ready to run, can be found here:

- https://github.com/skeeto/sort-circle

On any modern computer, rendering is real-time, even at 60 FPS, so you may be able to pipe the program's output directly into your media player of choice. (If not, consider getting a better media player!)

```
$ ./sort | mpv --no-correct-pts --fps=60 -
```

VLC requires some assistance from ppmtoy4m:

```
$ ./sort | ppmtoy4m -F60:1 | vlc -
```

Or you can just encode it to another format. Recent versions of libavformat can input PPM images directly, which means x264 can read the program's output directly:

```
$ ./sort | x264 --fps 60 -o video.mp4 /dev/stdin
```

By default there is no audio output. I wish there was a nice way to embed audio with the video stream, but this requires a container and that would destroy all the simplicity of this project. So instead, the -a option captures the audio in a separate file. Use ffmpeg to combine the audio and video into a single media file:

```
$ ./sort -a audio.wav | x264 --fps 60 -o video.mp4 /dev/stdin
$ ffmpeg -i video.mp4 -i audio.wav -vcodec copy -acodec mp3 \
         combined.mp4
```

You might think you'll be clever by using mkfifo (i.e. a named pipe) to pipe both audio and video into ffmpeg at the same time. This will only result in a deadlock since neither program is prepared for this. One will be blocked writing one stream while the other is blocked reading on the other stream.

Several years ago my intern and I used the exact same pure C rendering technique to produce raytracer videos.

I also used this technique to illustrate gap buffers.

Pixel format and rendering

This program really only has one purpose: rendering a sorting video with a fixed, square resolution. So rather than write generic image rendering functions, some assumptions will be hard coded. For example, the video size will just be hard coded and assumed square, making it simpler and faster. I chose 800x800 as the default, stored in the constant S that appears throughout the code.

Rather than define some sort of color struct with red, green, and blue fields, color will be represented by a 24-bit integer (long). I arbitrarily chose red to be the most significant 8 bits. This has nothing to do with the order of the individual channels in Netpbm since these integers are never dumped out. (This would have stupid byte-order issues anyway.) "Color literals" are particularly convenient and familiar in this format. For example, the constant for pink: 0xff7f7fUL.

In practice the color channels will be operated upon separately, so here are a couple of helper functions to convert the channels between this format and normalized floats (0.0–1.0).

```c
/* Unpack 0xRRGGBB into normalized floats (0.0-1.0). */
static void
rgb_split(unsigned long c, float *r, float *g, float *b)
{
    *r = ((c >> 16) / 255.0f);
    *g = (((c >> 8) & 0xff) / 255.0f);
    *b = ((c & 0xff) / 255.0f);
}

/* Pack normalized floats (0.0-1.0) back into 0xRRGGBB. */
static unsigned long
rgb_join(float r, float g, float b)
{
    unsigned long ir = roundf(r * 255.0f);
    unsigned long ig = roundf(g * 255.0f);
    unsigned long ib = roundf(b * 255.0f);
    return (ir << 16) | (ig << 8) | ib;
}
```

Originally I decided the integer form would be sRGB, and these functions handled the conversion to and from sRGB. Since it had no noticeable effect on the output video, I discarded it. In more sophisticated rendering you may want to take this into account.

The RGB buffer where images are rendered is just a plain old byte buffer with the same pixel format as PPM. The ppm_set() function writes a color to a particular pixel in the buffer, assumed to be S by S pixels. The complement to this function is ppm_get(), which will be needed for blending.

```c
/* Store 0xRRGGBB at pixel (x, y); each assignment keeps the low byte. */
static void
ppm_set(unsigned char *buf, int x, int y, unsigned long color)
{
    buf[y * S * 3 + x * 3 + 0] = color >> 16;
    buf[y * S * 3 + x * 3 + 1] = color >>  8;
    buf[y * S * 3 + x * 3 + 2] = color >>  0;
}

/* Load pixel (x, y) as 0xRRGGBB. */
static unsigned long
ppm_get(unsigned char *buf, int x, int y)
{
    unsigned long r = buf[y * S * 3 + x * 3 + 0];
    unsigned long g = buf[y * S * 3 + x * 3 + 1];
    unsigned long b = buf[y * S * 3 + x * 3 + 2];
    return (r << 16) | (g << 8) | b;
}
```

Since the buffer is already in the right format, writing an image is dead simple. I like to flush after each frame so that observers generally see clean, complete frames. It helps in debugging.

```c
static void
ppm_write(const unsigned char *buf, FILE *f)
{
    fprintf(f, "P6\n%d %d\n255\n", S, S);  /* header, then raw RGB */
    fwrite(buf, S * 3, S, f);
    fflush(f);
}
```

Dot rendering

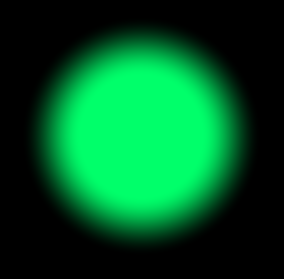

If you zoom into one of those dots, you may notice it has a nice smooth edge. Here's one rendered at 30x the normal resolution. I did not render, then scale this image in another piece of software. This is straight out of the C program.

In an early version of this program I used a dumb dot rendering routine. It took a color and a hard, integer pixel coordinate. All the pixels within a certain distance of this coordinate were set to the color, everything else was left alone. This had two bad effects:

- Dots jittered as they moved around since their positions were rounded to the nearest pixel for rendering. A dot would be centered on one pixel, then suddenly centered on another pixel. This looked bad even when those pixels were adjacent.

- There's no blending between dots when they overlap, making the lack of anti-aliasing even more pronounced.

Instead the dot's position is computed in floating point and is actually rendered as if it were between pixels. This is done with a shader-like routine that uses smoothstep, just as found in shader languages, to give the dot a smooth edge. That edge is blended into the image, whether that's the background or a previously-rendered dot. The input to the smoothstep is the distance from the floating point coordinate to the center (or corner?) of the pixel being rendered, maintaining that between-pixel smoothness.
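The article doesn't list smoothstep() itself. As a sketch, here is the conventional definition from shader languages: clamp the input to the range between the two edges, then apply the cubic 3t² − 2t³. The parameter names are illustrative. Note that the formula also works when the first edge is larger than the second, which simply reverses the ramp.

```c
/* Smooth ramp: 0.0 at `lower`, 1.0 at `upper`, with zero slope at
 * both ends. With lower > upper the ramp runs the other way. */
static float
smoothstep(float lower, float upper, float x)
{
    float t = (x - lower) / (upper - lower);
    if (t < 0.0f) t = 0.0f;
    if (t > 1.0f) t = 1.0f;
    return t * t * (3.0f - 2.0f * t);
}
```

For example, `smoothstep(2.0f, 1.0f, d)` is 1.0 for any distance `d` up to 1, falls smoothly through 0.5 at `d = 1.5`, and is 0.0 from `d = 2` outward.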

Rather than dump the whole function here, let's look at it piece by piece. I have two new constants to define the inner dot radius and the outer dot radius. It's smooth between these radii.

```c
#define R0  (S / 400.0f)  // dot inner radius
#define R1  (S / 200.0f)  // dot outer radius
```

The dot-drawing function takes the image buffer, the dot's coordinates, and its foreground color.

```c
static void
ppm_dot(unsigned char *buf, float x, float y, unsigned long fgc);
```

The first thing to do is extract the color components.

```c
float fr, fg, fb;
rgb_split(fgc, &fr, &fg, &fb);
```

Next determine the range of pixels over which the dot will be drawn. These are based on the two radii and will be used for looping.

```c
int miny = floorf(y - R1 - 1);
int maxy = ceilf(y + R1 + 1);
int minx = floorf(x - R1 - 1);
int maxx = ceilf(x + R1 + 1);
```

Here's the loop structure. Everything else will be inside the innermost loop. The dx and dy are the floating point distances from the center of the dot.

```c
for (int py = miny; py <= maxy; py++) {
    float dy = py - y;
    for (int px = minx; px <= maxx; px++) {
        float dx = px - x;
        /* ... */
    }
}
```

Use the x and y distances to compute the distance and smoothstep value, which will be the alpha. Within the inner radius the color is on 100%. Outside the outer radius it's 0%. Elsewhere it's something in between.

```c
float d = sqrtf(dy * dy + dx * dx);
float a = smoothstep(R1, R0, d);
```

Get the background color, extract its components, and blend the foreground and background according to the computed alpha value. Finally write the pixel back into the buffer.

```c
unsigned long bgc = ppm_get(buf, px, py);
float br, bg, bb;
rgb_split(bgc, &br, &bg, &bb);

float r = a * fr + (1 - a) * br;
float g = a * fg + (1 - a) * bg;
float b = a * fb + (1 - a) * bb;
ppm_set(buf, px, py, rgb_join(r, g, b));
```

That's all it takes to render a smooth dot anywhere in the image.

Rendering the array

The array being sorted is just a global variable. This simplifies some of the sorting functions since a few are implemented recursively. They can call for a frame to be rendered without needing to pass the full array. With the dot-drawing routine done, rendering a frame is easy:

```c
#define N     360     // number of dots

static int array[N];

static void
frame(void)
{
    static unsigned char buf[S * S * 3];
    memset(buf, 0, sizeof(buf));
    for (int i = 0; i < N; i++) {
        float delta = abs(i - array[i]) / (N / 2.0f);
        float x = -sinf(i * 2.0f * PI / N);
        float y = -cosf(i * 2.0f * PI / N);
        float r = S * 15.0f / 32.0f * (1.0f - delta);
        float px = r * x + S / 2.0f;
        float py = r * y + S / 2.0f;
        ppm_dot(buf, px, py, hue(array[i]));
    }
    ppm_write(buf, stdout);
}
```

The buffer is static since it will be rather large, especially if S is cranked up. Otherwise it's likely to overflow the stack. The memset() fills it with black. If you wanted a different background color, here's where you change it.

For each element, compute its delta from the proper array position, which becomes its distance from the center of the image. The angle is based on its actual position. The hue() function (not shown in this article) returns the color for the given element.

With the frame() function complete, all I need is a sorting function that calls frame() at appropriate times. Here are a couple of examples:

```c
static void
shuffle(int array[N], uint64_t *rng)
{
    for (int i = N - 1; i > 0; i--) {
        uint32_t r = pcg32(rng) % (i + 1);
        swap(array, i, r);
        frame();  /* one frame per swap */
    }
}

static void
sort_bubble(int array[N])
{
    int c;
    do {
        c = 0;
        for (int i = 1; i < N; i++) {
            if (array[i - 1] > array[i]) {
                swap(array, i - 1, i);
                c = 1;
            }
        }
        frame();  /* one frame per pass */
    } while (c);
}
```

Synthesizing audio

To add audio I need to keep track of which elements were swapped in this frame. When producing a frame I need to generate and mix tones for each element that was swapped.

Notice the swap() function above? That's not just for convenience. That's also how things are tracked for the audio.

```c
static int swaps[N];

static void
swap(int a[N], int i, int j)
{
    int tmp = a[i];
    a[i] = a[j];
    a[j] = tmp;
    /* a may point into the middle of the global array */
    swaps[(a - array) + i]++;
    swaps[(a - array) + j]++;
}
```

Before we get ahead of ourselves I need to write a WAV header. Without getting into the purpose of each field, just note that the header has 13 fields, followed immediately by 16-bit little endian PCM samples. There will be only one channel (mono).

```c
#define HZ    44100         // audio sample rate

static void
wav_init(FILE *f)
{
    emit_u32be(0x52494646UL, f); // "RIFF"
    emit_u32le(0xffffffffUL, f); // file length
    emit_u32be(0x57415645UL, f); // "WAVE"
    emit_u32be(0x666d7420UL, f); // "fmt "
    emit_u32le(16,           f); // struct size
    emit_u16le(1,            f); // PCM
    emit_u16le(1,            f); // mono
    emit_u32le(HZ,           f); // sample rate (i.e. 44.1 kHz)
    emit_u32le(HZ * 2,       f); // byte rate
    emit_u16le(2,            f); // block size
    emit_u16le(16,           f); // bits per sample
    emit_u32be(0x64617461UL, f); // "data"
    emit_u32le(0xffffffffUL, f); // byte length
}
```

Rather than tackle the annoying problem of figuring out the total length of the audio ahead of time, I just wave my hands and write the maximum possible number of bytes (0xffffffff). Most software that can read WAV files will understand this to mean the entire rest of the file contains samples.
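The emit_*() helpers used by wav_init() are not listed in the article. A plausible sketch, assuming they simply write the integer one byte at a time in the named byte order, which keeps the output independent of the host's endianness:

```c
#include <stdio.h>

/* Write the low 16 bits of v, least significant byte first. */
static void
emit_u16le(unsigned v, FILE *f)
{
    fputc((v >> 0) & 0xff, f);
    fputc((v >> 8) & 0xff, f);
}

/* Write the low 32 bits of v, least significant byte first. */
static void
emit_u32le(unsigned long v, FILE *f)
{
    fputc((v >>  0) & 0xff, f);
    fputc((v >>  8) & 0xff, f);
    fputc((v >> 16) & 0xff, f);
    fputc((v >> 24) & 0xff, f);
}

/* Write the low 32 bits of v, most significant byte first. */
static void
emit_u32be(unsigned long v, FILE *f)
{
    fputc((v >> 24) & 0xff, f);
    fputc((v >> 16) & 0xff, f);
    fputc((v >>  8) & 0xff, f);
    fputc((v >>  0) & 0xff, f);
}
```

The big endian variant is only there so the four-character tags can be written as readable hex constants: 0x52494646 comes out as the bytes "RIFF" in order.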

With the header out of the way all I have to do is write 1/60th of a second worth of samples to this file each time a frame is produced. That's 735 samples (1,470 bytes) at 44.1kHz.
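The arithmetic is 44100 / 60 = 735 samples per frame, times 2 bytes per 16-bit sample. It works out evenly only because 60 divides 44100. As a sketch of what a different frame rate would need (this is not part of the article's program, and `frame_samples` is a hypothetical name), the per-frame count can be derived from running totals so that audio and video never drift apart:

```c
/* Number of samples to emit for 0-based frame number `frame` so that
 * after k frames exactly floor(k * hz / fps) samples were written. */
static long long
frame_samples(long long frame, long long hz, long long fps)
{
    return (frame + 1) * hz / fps - frame * hz / fps;
}
```

At 44.1kHz and 60 FPS this returns 735 for every frame. At 24 FPS it alternates between 1837 and 1838, which averages out to the exact 1837.5 samples per frame.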

The simplest place to do audio synthesis is in frame() right after rendering the image.

```c
#define FPS   60    // output framerate
#define MINHZ 20    // lowest tone
#define MAXHZ 1000  // highest tone

static void
frame(void)
{
    /* ... rendering ... */

    /* ... synthesis ... */
}
```

With the largest tone frequency at 1kHz, Nyquist says we only need to sample at 2kHz. 8kHz is a very common sample rate and gives some overhead space, making it a good choice. However, I found that audio encoding software was a lot happier to accept the standard CD sample rate of 44.1kHz, so I stuck with that.

The first thing to do is to allocate and zero a buffer for this frame's samples.

```c
int nsamples = HZ / FPS;
static float samples[HZ / FPS];
memset(samples, 0, sizeof(samples));
```

Next determine how many "voices" there are in this frame. This is used to mix the samples by averaging them. If an element was swapped more than once this frame, it's a little louder than the others, i.e. it's played twice at the same time, in phase.

```c
int voices = 0;
for (int i = 0; i < N; i++)
    voices += swaps[i];
```

Here's the most complicated part. I use sinf() to produce the sinusoidal wave based on the element's frequency. I also use a parabola as an envelope to shape the beginning and ending of this tone so that it fades in and fades out. Otherwise you get the nasty, high-frequency "pop" sound as the wave is given a hard cut off.

```c
for (int i = 0; i < N; i++) {
    if (swaps[i]) {
        float hz = i * (MAXHZ - MINHZ) / (float)N + MINHZ;
        for (int j = 0; j < nsamples; j++) {
            float u = 1.0f - j / (float)(nsamples - 1);
            float parabola = 1.0f - (u * 2 - 1) * (u * 2 - 1);
            float envelope = parabola * parabola * parabola;
            float v = sinf(j * 2.0f * PI / HZ * hz) * envelope;
            samples[j] += swaps[i] * v / voices;
        }
    }
}
```

Finally I write out each sample as a signed 16-bit value. I flush the frame audio just like I flushed the frame image, keeping them somewhat in sync from an outsider's perspective.

```c
for (int i = 0; i < nsamples; i++) {
    int s = samples[i] * 0x7fff;
    emit_u16le(s, wav);
}
fflush(wav);
```

Before returning, reset the swap counter for the next frame.

```c
memset(swaps, 0, sizeof(swaps));
```

Font rendering

You may have noticed there was text rendered in the corner of the video announcing the sort function. There's font bitmap data in font.h which gets sampled to render that text. It's not terribly complicated, but you'll have to study the code on your own to see how that works.
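To illustrate the general idea of sampling a font bitmap, here is a sketch built on invented data. The 8x8 one-bit-per-pixel glyph layout and the names `glyph_T` and `glyph_draw` are assumptions made for this example; they are not the format or the functions of font.h.

```c
#define S 800  /* frame is S by S pixels, as in the article */

/* Hypothetical 8x8 glyph for the letter 'T': one byte per row, with
 * the most significant bit as the leftmost pixel. */
static const unsigned char glyph_T[8] = {
    0xff, 0xff, 0x18, 0x18, 0x18, 0x18, 0x18, 0x00
};

/* Draw a glyph with its top-left corner at (x, y), scaled up by an
 * integer factor. Each output pixel samples the bitmap cell that it
 * falls in. The caller must keep the glyph inside the frame. */
static void
glyph_draw(unsigned char *buf, const unsigned char glyph[8],
           int x, int y, int scale, unsigned long color)
{
    for (int py = 0; py < 8 * scale; py++) {
        for (int px = 0; px < 8 * scale; px++) {
            int bit = (glyph[py / scale] >> (7 - px / scale)) & 1;
            if (bit) {
                unsigned char *p = buf + ((y + py) * S + (x + px)) * 3;
                p[0] = (color >> 16) & 0xff;
                p[1] = (color >>  8) & 0xff;
                p[2] = (color >>  0) & 0xff;
            }
        }
    }
}
```

A string is then just a row of glyphs, each drawn `8 * scale` pixels to the right of the previous one.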

Learning more

This simple video rendering technique has served me well for some years now. All it takes is a bit of knowledge about rendering. I learned quite a bit just from watching Handmade Hero, where Casey writes a software renderer from scratch, then implements a nearly identical renderer with OpenGL. The more I learn about rendering, the better this technique works.

Before writing this post I spent some time experimenting with using a media player as an interface to a game. For example, rather than render the game using OpenGL or similar, render it as PPM frames and send it to the media player to be displayed, just as game consoles drive television sets. Unfortunately the latency is horrible (multiple seconds) so that idea just doesn't work. So while this technique is fast enough for real time rendering, it's no good for interaction.

All information on this blog, unless otherwise noted, is hereby released into the public domain, with no rights reserved.

Source: https://nullprogram.com/blog/2017/11/03/
